ROBOTICS VISION MODULE

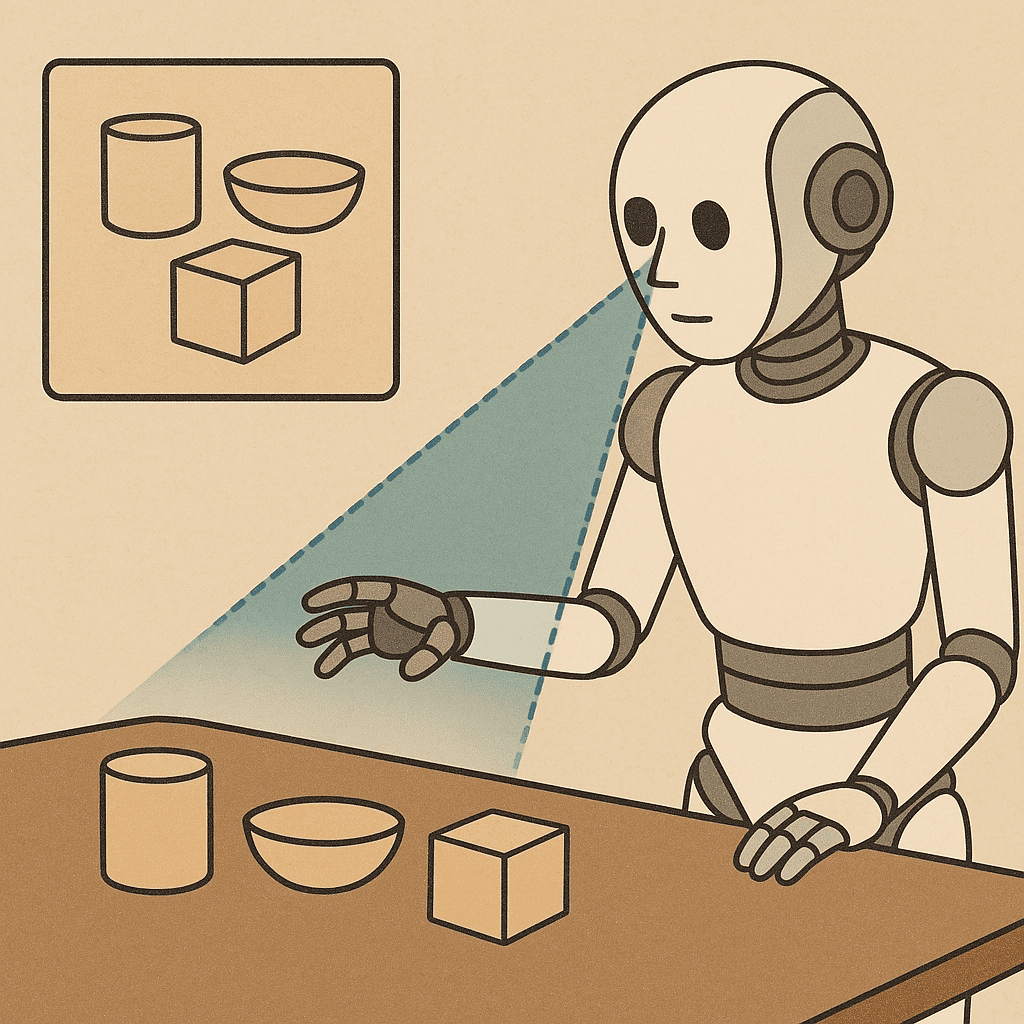

This module introduces computer vision methods for Human-Robot Collaboration. First, we present the fundamentals of artificial vision. Then, we look more closely at methods for one of the most important visual tasks in robotics, object detection, using both “classical” and deep learning approaches. Building on these fundamentals with 3D vision concepts, we describe in detail two advanced applications: speed-and-separation monitoring and activity recognition.

Open Access Education pilot

Lesson Description

Introduction (Lesson 1): Why is vision useful in collaborative robotics? We give a first peek into this question by presenting the course outline.

Keywords: introduction, vision, HRC

Computer vision fundamentals (Lesson 2): We cover the fundamentals of perspective vision, including how to calibrate a camera to measure positions in a robotic system. Finally, we present some basics of image processing.

Keywords: perspective, camera calibration, image processing

2D Object detection (Lesson 3): Manipulating (or avoiding) objects is one of the most common duties for a robotic system. We describe some simple methods for detecting objects in 2D images, localizing them, and picking them up.

Keywords: object detection, pose estimation, manipulation

Object detection with Deep Learning (Lesson 4): We build on object detection by presenting data-hungry but powerful methods for recognizing and localizing objects in high-variability contexts.

Keywords: deep learning, object detection

3D vision (Lesson 5): Perspective vision is rich in information and widely used, but it fails when we need to measure positions in 3D. We present the main 3D vision technologies and show how they can be used in a robotic manipulation system.

Keywords: 3D vision, camera calibration, sensors
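To illustrate why a single perspective image cannot measure 3D positions, here is a minimal sketch of the pinhole projection model. The focal length `f`, the principal point `(cx, cy)`, and the two sample points are illustrative assumptions, not values from the course. Two points at different depths along the same viewing ray land on the same pixel, so depth cannot be recovered from that pixel alone.

```python
def project(point, f=800.0, cx=320.0, cy=240.0):
    """Project a 3D point (X, Y, Z), given in camera coordinates, to a pixel (u, v).

    f, cx and cy are assumed intrinsics (in pixels) chosen for illustration.
    """
    X, Y, Z = point
    if Z <= 0:
        raise ValueError("point must be in front of the camera (Z > 0)")
    # Perspective division: image coordinates scale with 1/Z.
    return (f * X / Z + cx, f * Y / Z + cy)


# Two hypothetical points on the same viewing ray, one twice as far as the other.
near = (0.25, 0.125, 1.0)
far = (0.5, 0.25, 2.0)

same_pixel = project(near) == project(far)
print(project(near), project(far), same_pixel)
```

A depth sensor or a second calibrated view supplies the missing Z value, which is the role of the 3D vision technologies covered in this lesson.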

Speed-and-separation monitoring (Lesson 6): When humans are present in the robot’s workspace, safety becomes the top priority. We leverage 3D vision and deep learning to solve the speed-and-separation monitoring task, a critical safety criterion specified in ISO/TS 15066 on collaborative robots.

Keywords: speed-and-separation, point cloud, semantic segmentation
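As a rough illustration of the speed-and-separation check, the sketch below uses a simplified, constant-speed form of the protective separation distance that is commonly quoted for ISO/TS 15066. The function names, parameter names, and numeric values are assumptions made for this example; the standard itself is the authoritative source for the exact definitions.

```python
def protective_separation_distance(v_h, v_r, t_r, t_s, s_s, c=0.0, z_d=0.0, z_r=0.0):
    """Simplified constant-speed estimate of the required separation, in metres.

    v_h: human speed toward the robot (m/s)
    v_r: robot speed toward the human (m/s)
    t_r: reaction time of the sensing and control system (s)
    t_s: robot stopping time (s)
    s_s: robot stopping distance (m)
    c:   intrusion distance of the sensing system (m)
    z_d, z_r: position uncertainty of the human and of the robot (m)
    """
    s_h = v_h * (t_r + t_s)  # distance covered by the human until the robot has stopped
    s_r = v_r * t_r          # distance covered by the robot before it starts braking
    return s_h + s_r + s_s + c + z_d + z_r


def is_separation_safe(measured_distance, required_distance):
    """True when the measured human-robot distance meets the requirement."""
    return measured_distance >= required_distance


# Illustrative numbers only, not taken from the course or from the standard.
required = protective_separation_distance(
    v_h=1.6, v_r=0.5, t_r=0.1, t_s=0.3, s_s=0.1, c=0.1
)
print(round(required, 3), is_separation_safe(1.2, required))
```

In a vision-based system, the measured distance would come from the 3D perception pipeline, for example the closest distance between the robot and the points segmented as human.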

Activity recognition (Lesson 7): To achieve a higher level of decision-making capability, robots should be able to understand what the humans next to them are doing. We outline some methods for classifying human activities using 2D vision, 3D vision, and deep learning.

Keywords: vision, deep learning, activity recognition

Outro (Lesson 8): Artificial vision can do this and much more for human-robot collaboration. To conclude the course, we present some emerging applications of vision in robotics.

Keywords: summary, frontier AI

Learn more

Contact Ernest for more information